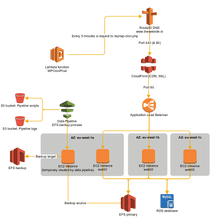

We worked out an infrastructure plan to set up a high-availability platform on AWS (Amazon Web Services), shown in the diagram above.

One important thing you see in the diagram is that the EC2 instances are split over multiple Availability Zones.

In AWS, an Availability Zone is a physically separate location within a region. Each Availability Zone is engineered to be isolated from failures in other Availability Zones. When you set up a high-availability environment, it should always be distributed over multiple Availability Zones.

I’ll go over every component we used:

Route53

If you want to use CloudFront, you’re almost obliged to have Route53 manage the DNS for you. This is because the DNS RFCs do not allow a CNAME record at the root domain (or zone apex), which normally holds an A record instead, but CloudFront wants you to create a CNAME to the CloudFront domain name. Most DNS providers can’t resolve this conflict, but Route53 supports an ALIAS record that does.

That’s not the only benefit of using Route53: it also has an extensive set of options to route traffic based on geolocation, latency, round-robin, and so on. We’re not using those options at the moment, but probably will when expanding the website abroad.
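As an illustration of the ALIAS record described above, here is a minimal sketch of the change batch you could hand to Route53. The domain names are placeholders and the helper name `apex_alias_change_batch` is made up for this example; the hosted zone ID is the fixed value AWS documents for CloudFront alias targets.

```python
# Fixed hosted zone ID that AWS documents for CloudFront alias targets.
CLOUDFRONT_HOSTED_ZONE_ID = "Z2FDTNDATAQYW2"


def apex_alias_change_batch(apex_domain: str, distribution_domain: str) -> dict:
    """Build a Route53 change batch that aliases the zone apex to CloudFront.

    Both arguments are placeholders in this sketch, not values from our setup.
    """
    return {
        "Comment": "Alias the zone apex to a CloudFront distribution",
        "Changes": [
            {
                "Action": "UPSERT",
                "ResourceRecordSet": {
                    "Name": apex_domain,
                    # An alias record is still an A record, so the apex stays valid.
                    "Type": "A",
                    "AliasTarget": {
                        "HostedZoneId": CLOUDFRONT_HOSTED_ZONE_ID,
                        "DNSName": distribution_domain,
                        "EvaluateTargetHealth": False,
                    },
                },
            }
        ],
    }


batch = apex_alias_change_batch("example.com.", "d111111abcdef8.cloudfront.net.")
print(batch["Changes"][0]["ResourceRecordSet"]["AliasTarget"]["DNSName"])
```

With boto3 installed and credentials configured, a dictionary like this can be passed as the `ChangeBatch` argument of the Route53 client's `change_resource_record_sets` call, together with the `HostedZoneId` of your own zone.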

CloudFront

CloudFront has many features and is an important component in serving a high-performance website. It’s a Content Delivery Network service and fulfils the following roles in our infrastructure:

- Caching all the assets and full pages (only for users that are not logged in) on the edge locations.

- Mobile Device Detection to deliver (and cache) customised content per device (mobile, tablet, desktop).

- Offloading SSL by handling the SSL encryption and passing the unencrypted HTTP requests to the load balancer.

- Providing HTTP/2 support.

- Compression of assets and pages.

CloudFront is distributed over so-called edge locations, which are placed as close to the visitor as possible.

This helps keep the website fast for visitors anywhere in the world.

SSL offloading is also a nice feature because you can request SSL certificates via AWS Certificate Manager for free!
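To make the device detection role a bit more concrete: CloudFront can add headers such as `CloudFront-Is-Mobile-Viewer` and `CloudFront-Is-Tablet-Viewer` to the request it sends to the origin, provided the distribution is configured to forward them. The sketch below shows how an origin could map those headers to a device class. It is written in Python purely for illustration (our origin runs PHP), and the function name `device_class` is an assumption, not part of our codebase.

```python
def device_class(headers: dict) -> str:
    """Map CloudFront device-detection headers to 'mobile', 'tablet' or 'desktop'."""
    # Header names are case-insensitive, so normalise before comparing.
    normalized = {name.lower(): value.strip().lower() for name, value in headers.items()}

    # Check tablet first so a tablet is never classified as a plain mobile device.
    if normalized.get("cloudfront-is-tablet-viewer") == "true":
        return "tablet"
    if normalized.get("cloudfront-is-mobile-viewer") == "true":
        return "mobile"
    # Fall back to desktop when no device header is present or set to "true".
    return "desktop"


print(device_class({"CloudFront-Is-Mobile-Viewer": "true"}))
```

Because CloudFront includes the forwarded headers in its cache key, each device class gets its own cached copy of the page.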

Application Load Balancer

The Application Load Balancer is a new type of load balancer. It goes further than the Classic Load Balancer by making it possible to route traffic based on application-level information.

The load balancer is crucial in a high-availability environment to distribute the traffic to the EC2 instances. The load balancer itself is also distributed over multiple Availability Zones, making it resilient against a failing Availability Zone.

EC2 instances

The EC2 instances run Nginx & PHP-FPM to serve the WordPress website. Every EC2 instance in the load balancer resource pool should be identical, because they should all be able to accept the same number of requests from the load balancer. Every instance runs in a different Availability Zone for the high-availability aspect.

We provision and deploy the servers with Ansible.

Amazon RDS

We use Amazon RDS as our database, running MariaDB. We enabled the ‘Multi-AZ’ option, which means there’s an extra RDS instance deployed in another Availability Zone that acts as a hot standby. Amazon RDS takes care of the replication and the fail-over when something happens on the primary instance. A fail-over takes about 2 to 3 minutes, which is acceptable in our situation.

The Multi-AZ feature is crucial to avoid the database being the Single Point Of Failure.

Other benefits of using Amazon RDS instead of installing MariaDB on an EC2 instance are that maintenance and backups are taken care of. With Multi-AZ enabled, the backups are done on the standby instance, which keeps the impact on performance minimal. And when maintenance (like minor updates) is needed, the standby instance is updated first, before the fail-over is triggered and the primary instance is updated.

The only downside of enabling Multi-AZ is that the costs are doubled compared to Single-AZ (because you basically run 2 instances).
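For reference, Multi-AZ comes down to a single flag when the instance is created through the API. The sketch below builds the keyword arguments for boto3's RDS `create_db_instance` call. All concrete values (identifier, instance class, storage size, credentials) are placeholders, and the helper name `multi_az_mariadb_params` is invented for this example.

```python
def multi_az_mariadb_params(identifier: str, instance_class: str, storage_gib: int,
                            username: str, password: str) -> dict:
    """Build keyword arguments for an RDS MariaDB instance with a standby in another AZ."""
    return {
        "DBInstanceIdentifier": identifier,
        "Engine": "mariadb",
        "DBInstanceClass": instance_class,
        "AllocatedStorage": storage_gib,
        "MasterUsername": username,
        "MasterUserPassword": password,
        # This flag asks RDS to keep a synchronous standby in a second Availability Zone.
        "MultiAZ": True,
    }


# Placeholder values only; in practice the password should come from a secret store.
params = multi_az_mariadb_params("wordpress-db", "db.t3.medium", 50, "admin", "change-me")
print(params["MultiAZ"])
```

With boto3 available, these parameters can be passed as `boto3.client("rds").create_db_instance(**params)`.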

Elastic File System

If you run a website in a load balanced environment, one of the most important things to keep in mind is that you can’t store (persistent) information on the local filesystem.

The load balancer makes sure requests are evenly distributed over the EC2 instances, so with every HTTP request a user can end up on a different instance. This means that an image uploaded while the user is on instance 1 isn’t visible when the next request lands on instance 2. To resolve this, you need some sort of shared filesystem between all instances.

Elastic File System is a very simple solution, but should be used with care. It acts as an NFS server; you can mount the filesystem as an NFS mount on the EC2 instances and place all your shared files on it. But keep in mind that there is latency on every read/write action. You should never place your code on it, but only use it for media and assets.

Creating an EFS is as easy as entering a name and choosing the Availability Zones in which you’re mounting the filesystem.

You only pay for the storage used; prices are in the same range as for storing files on S3.
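Mounting the filesystem on an instance is a regular NFS mount. As a rough sketch, the helper below assembles the mount command from a filesystem ID and region, using the NFS options that AWS recommends in its EFS documentation. The filesystem ID, region and mount point are placeholders, and `efs_mount_command` is a name made up for this example.

```python
import shlex

# NFS client options recommended in the AWS documentation for EFS.
EFS_NFS_OPTIONS = "nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2,noresvport"


def efs_mount_command(file_system_id: str, region: str, mount_point: str) -> list:
    """Return the argument list for mounting an EFS filesystem over NFS (run as root)."""
    # EFS exposes each filesystem under a predictable DNS name.
    dns_name = f"{file_system_id}.efs.{region}.amazonaws.com"
    return ["mount", "-t", "nfs4", "-o", EFS_NFS_OPTIONS, f"{dns_name}:/", mount_point]


# Placeholder ID, region and path; media and assets only, never the code itself.
command = efs_mount_command("fs-12345678", "eu-west-1", "/var/www/shared")
print(shlex.join(command))
```

The list form can be handed directly to `subprocess.run` on an instance that has an NFS client installed.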

Data Pipeline

If you use Elastic File System, you’re faced with a new problem: creating a backup of the files on the EFS. You can of course run a cronjob on one of the EC2 instances to back up the files to S3 or another EFS, but that means one of the EC2 instances in the load balancer pool has a different role and becomes a Single Point Of Failure.

To avoid this, we’re using a Data Pipeline. Data Pipeline is a very powerful tool to process and move data between different AWS services. There’s a walkthrough available on how to back up an EFS target with Data Pipeline.

The Data Pipeline is started every night and runs the following steps:

- Create and start a new EC2 instance;

- Pull a bash script from an S3 bucket and run it on the EC2 instance;

- The bash script will install some basic software to mount and back up the EFS;

- Two EFS targets are mounted; the source and the backup EFS;

- Rsync is used to back up files from the source to the backup EFS;

- When successfully finished, the EC2 instance is shut down and terminated.

In the AWS console you can see the result and logs of the pipeline.
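The steps above can be sketched as the list of commands the script runs on the temporary instance. This is an illustration, not the script from the AWS walkthrough: the mount points, the `yum` package name (which assumes an Amazon Linux style instance) and the rsync flags are assumptions, and `backup_commands` is a hypothetical helper.

```python
import shlex

NFS_OPTIONS = "nfsvers=4.1,hard,timeo=600,retrans=2"


def backup_commands(source_dns: str, backup_dns: str,
                    source_dir: str = "/mnt/source", backup_dir: str = "/mnt/backup") -> list:
    """Return the commands for an EFS-to-EFS backup, in the order they should run."""
    return [
        # Install the NFS client (assumed package name for Amazon Linux).
        ["yum", "-y", "install", "nfs-utils"],
        ["mkdir", "-p", source_dir, backup_dir],
        # Mount both EFS targets: the source and the backup filesystem.
        ["mount", "-t", "nfs4", "-o", NFS_OPTIONS, f"{source_dns}:/", source_dir],
        ["mount", "-t", "nfs4", "-o", NFS_OPTIONS, f"{backup_dns}:/", backup_dir],
        # Trailing slashes make rsync copy the directory contents; --delete mirrors removals.
        ["rsync", "-a", "--delete", f"{source_dir}/", f"{backup_dir}/"],
    ]


# Placeholder DNS names for the two filesystems.
for command in backup_commands("fs-11111111.efs.eu-west-1.amazonaws.com",
                               "fs-22222222.efs.eu-west-1.amazonaws.com"):
    print(shlex.join(command))
```

Note that `--delete` turns the backup into a mirror, so a file deleted by mistake disappears from the backup on the next run; leave the flag out if you want the backup to keep removed files.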

Lambda

As mentioned earlier, we don’t want cronjobs on one of the EC2 instances in the load balancer pool. But WordPress has a cronjob that needs to be called every x minutes. We use AWS Lambda for this. Lambda lets you run simple functions “serverless”: you don’t have to worry about the server, because AWS Lambda takes care of that.
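A Lambda function for this can be very small. The sketch below is one possible shape rather than our exact function: it requests WordPress’s standard `wp-cron.php` entry point and reports the HTTP status. The site URL is a placeholder, and the extra `opener` parameter exists only so the function can be exercised without network access. It assumes the function is triggered by a schedule rule.

```python
import urllib.request

# Placeholder site URL; wp-cron.php is WordPress's standard cron entry point.
WP_CRON_URL = "https://example.com/wp-cron.php?doing_wp_cron"


def lambda_handler(event, context, opener=urllib.request.urlopen):
    """Trigger the WordPress cron with a single HTTP request.

    Lambda only passes `event` and `context`; `opener` defaults to urlopen.
    """
    with opener(WP_CRON_URL, timeout=10) as response:
        # Return the status so it shows up in the invocation result and logs.
        return {"status": response.status}
```

A setup like this is typically paired with `define('DISABLE_WP_CRON', true);` in `wp-config.php`, so WordPress no longer tries to run its cron on regular page loads.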

Supporting systems

Besides AWS we use some supporting systems for monitoring and reporting:

- LogDNA; aggregates logs from the servers and makes them filterable and searchable in one interface;

- Datadog; monitors all the AWS services and alerts when something is wrong;

- New Relic; monitors the performance of the website;

- Sentry; collects errors from the website and sends notifications when something happens.

Lessons learned

- Try to avoid using EFS. It’s slow and you have to deal with filesystem concerns like file permissions and locking. Instead, use object storage like S3 and integrate it in your application.

- AWS has some good documentation; always have a look at it when using a new service.

- Make sure you know what reserved instances are; they can save you 1/3 of the costs.

- Have a separate AWS account that you can use as playground to get familiar with all the services.

- If you have multiple AWS accounts, configure cross-account access to easily switch between the accounts.

- Have a look at Terraform or other Infrastructure as Code tools.