Service discovery: yet another technique to add to your stack of “availability tools” and “microservices architecture”. As a newbie to the world of internet applications, I first heard about service discovery at PHPBenelux, along with how it can be used to improve the availability of your project. Mariusz Gil gave a pretty inspiring talk about service discovery and configuration provisioning, in which he introduced me to Consul, a service discovery tool packed with features we can use to dynamically discover services. But how does this work with containers? Let’s find out!

What is service discovery all about?

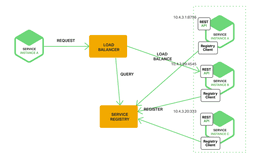

In an infrastructure where the application is split up into several pieces (for example, services in a microservice architecture), there is usually some sort of load balancer that handles the client requests to your application. It’s vital that the load balancer knows where to find the front-end of your application. If you’re doing fancy tricks like dynamically increasing or decreasing the number of instances of an application piece based on the load it is experiencing, the answer to “where can I find the front-end application(s)?” may vary. This is where service discovery can help the load balancer find the correct addresses for the requested “services”.

Whether you’re using server-side or client-side service discovery, both variants use a service registry that knows the locations of all services. The main difference is that with client-side discovery the clients communicate directly with the service registry, instead of going through a separate router or load balancer. You might already be using these kinds of techniques in your current infrastructure, even without using multiple application hosts. For instance, the AWS Elastic Load Balancer (ELB) is an example of a server-side discovery router.
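To make the client-side variant a bit more tangible: once a client has asked the registry for the addresses of a service, it has to choose one of them itself. Below is a minimal sketch of that selection step, assuming the registry's answer has already been reduced to one `address:port` per line. The function name `pick_backend` and the request counter are hypothetical; they are not part of any tool mentioned in this post.

```shell
# Pick one backend from the registry's answer (one address:port per line on stdin).
# $1 = request counter; successive values rotate through the list (round-robin).
pick_backend() {
    awk -v n="$1" '
        { backends[NR] = $0 }                       # remember every line
        END { if (NR > 0) print backends[(n % NR) + 1] }
    '
}

# Example: the second request (counter 1) goes to the second backend.
#   printf 'a:80\nb:80\n' | pick_backend 1
```

With server-side discovery, this choice is made by the router or load balancer instead, so the client only needs to know one address.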

More than just linking

Most service discovery solutions can do more than just point a system towards the correct addresses. Tools like Consul and etcd offer some sort of health checking mechanism. When a service is unhealthy or not responding, the service discovery tool will no longer return its address, which enables fail-over to one of the other nodes.
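As a rough illustration of what "only returning healthy addresses" can look like with Consul: its HTTP API has a health endpoint that accepts a `passing` filter. The sketch below only builds and calls that URL. The helper names are my own, and the address in the example is a placeholder, so treat it as a starting point rather than a finished client.

```shell
# Build the URL of Consul's health endpoint for one service.
# "?passing" asks Consul to return only instances whose health checks pass.
# $1 = Consul HTTP address (host:port), $2 = service name.
healthy_instances_url() {
    printf 'http://%s/v1/health/service/%s?passing\n' "$1" "$2"
}

# Fetch the healthy instances as JSON (needs curl and a reachable Consul agent).
healthy_instances() {
    curl -fsS "$(healthy_instances_url "$1" "$2")"
}

# Example (placeholder address, substitute your own Consul agent):
#   healthy_instances 127.0.0.1:8500 my-service
```

An instance that fails its checks simply drops out of this response, which is what makes the fail-over possible.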

Being able to dynamically identify and adjust the components of your application is the beginning of a powerful and flexible infrastructure. Imagine: you can now do blue-green deployments, A/B testing, and canary releases.

Let’s do a demo

Let’s put this thing to work! We are going to launch a service registry that will keep track of all the applications we are running. After we have registered all our services, an nginx server will auto-configure itself to be the load balancer for the services. Sounds pretty simple, right? A few things to note before starting:

- We will be using Docker for this demo because it’s really easy to distribute applications with it. If you’re not familiar with Docker, go ahead and give it a try.

- We are not trying to reinvent the wheel. The demo is based on some other tutorials that go into more detail about the actions you’ll take; have a look at them if you want to learn more.

- We will not be building something that is production-ready. Managing and orchestrating multiple nodes requires more than just service discovery to make it all work (in an appropriate way).

- We are running this demo on a single host, so only the port numbers will vary.
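Before we start, it may help to picture what "auto-configure" boils down to for the load balancer: turning a list of discovered addresses into an nginx `upstream` block. The sketch below does just that for `address:port` pairs read from standard input. It is an illustration under my own assumptions, not the template the demo image actually uses; the function name `render_upstream` and the port numbers in the example are hypothetical.

```shell
# Render an nginx "upstream" block from address:port pairs read on stdin.
# $1 = name of the upstream group.
render_upstream() {
    printf 'upstream %s {\n' "$1"
    while IFS= read -r backend; do
        # Skip empty lines so they do not produce broken "server ;" entries.
        if [ -n "$backend" ]; then
            printf '    server %s;\n' "$backend"
        fi
    done
    printf '}\n'
}

# Example with made-up ports on a single host:
#   printf '10.200.2.1:32768\n10.200.2.1:32769\n' | render_upstream hello_world
```

Because everything in this demo runs on one host, the generated `server` lines would all share the same IP and differ only in their port.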

To start our short demo, we need to find the IP address of our Docker host or interface. For native Docker installations, this is the address of the Docker network interface (usually docker0; use ifconfig to confirm this). For boot2docker or docker-machine, this is the address used to reach that specific host. Take note of that IP, because we are going to use it in the commands. In my case, the Docker network interface is bound to 10.200.2.1. If this address differs from your configuration, be sure to change the IP in every command we execute.
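If you would rather not read the address off the screen by hand, a small helper can pull it out of the interface listing. This is a sketch that assumes a Linux host with the `ip` tool available; the function name `first_ipv4` is my own, and the docker-machine name `default` in the comment is an assumption.

```shell
# Print the first IPv4 address found in `ip addr` style output read from stdin.
first_ipv4() {
    awk '{
        for (i = 1; i < NF; i++)
            if ($i == "inet") {          # the field after "inet" is ADDRESS/PREFIX
                split($(i + 1), parts, "/")
                print parts[1]
                exit
            }
    }'
}

# Usage on a native Linux install (docker0 is the usual interface name):
#   ip -4 addr show docker0 | first_ipv4
# With docker-machine, the tool reports the address itself
# ("default" is an assumed machine name):
#   docker-machine ip default
```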

Starting the service discovery

We’ll be using Registrator backed by Consul to handle most of our service discovery needs. Why? Because for a short demo, changing multiple configuration lines just seems like too much effort, and Registrator solves this in a painless manner. Also, Consul seems like a good and promising service discovery solution.

Start the consul container by running:

docker run -d --name=sdblog_consul --net=host gliderlabs/consul-server -bootstrap -advertise=10.200.2.1

Next, start Registrator by running the command below. Be sure to update the IP address if applicable.

docker run -d --name=sdblog_registrator --net=host --volume=/var/run/docker.sock:/tmp/docker.sock gliderlabs/registrator:latest consul://10.200.2.1:8500

Cool, what we did is start a Consul server that will keep track of all the services available in our application. You can reach the Consul server by, for example, navigating to http://10.200.2.1:8500/ui or by talking to its API like so:

curl -H "Content-type: application/json" 'http://10.200.2.1:8500/v1/catalog/services'

# Or, to get an overview of our specific hello-world service

curl -H "Content-type: application/json" 'http://10.200.2.1:8500/v1/catalog/service/hello-world'

Spin up services

The adventurous among us tried the above commands and discovered that we currently have no services available other than Consul. Let’s change that by spinning up five of our hello world services.

for i in $(seq 5); do docker run -h sdblog_app$i --name sdblog_app$i -P -d tutum/hello-world; done

Start the load balancer

After running the command above, try the Consul dashboard or API again and notice that it now holds data for our hello world services. There is only one step left to complete our simple architecture: the load balancer. We’ll start this one interactively so we can watch it update its configuration.

docker run -it --name sdblog_nginx -e "CONSUL=10.200.2.1:8500" -e "SERVICE=hello-world" -p 80:80 nstapelbroek/blog-sd-nginx

That should be all. Instead of running the last command in the background, we run it in the foreground so that we can read its logs and see the load balancer adjust its configuration to the available services. Enjoy your demo at http://10.200.2.1/ (or your own IP). When you refresh the page, the requests should be distributed between the available application services. It might look like it’s skipping a node, but bear in mind that more than one request is involved in loading this page (the favicon, the document and the tutum image). If you want to spin up even more application services, you can use the command below; just be sure you’re not closing your nginx by accident.

docker run --name {appname} -h {appname} -P -d tutum/hello-world

What’s next?

We now have a general idea of service discovery. Although there is much more to it than we’ve just witnessed, you can probably recognize that this enables a scalable infrastructure. Service discovery is even more powerful if you use DNS to query the services, since DNS can provide fallback and caching so you don’t have to build those yourself in your application.
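For the curious, here is a hedged sketch of what querying services over DNS could look like with Consul. Consul exposes services under names of the form `<service>.service.<domain>`, with `consul` as the default domain. The helper names below are my own, and the DNS port is assumed to be Consul's default of 8600; the image used in this demo may map it differently, so check your own setup.

```shell
# Build the DNS name under which Consul exposes a service.
# $1 = service name, $2 = DNS domain (defaults to "consul").
consul_dns_name() {
    printf '%s.service.%s\n' "$1" "${2:-consul}"
}

# Ask Consul's DNS interface for SRV records, which carry both the
# target host and the port of every instance (needs dig).
# $1 = Consul host, $2 = service name, $3 = DNS port (assumed default: 8600).
lookup_service() {
    dig +short @"$1" -p "${3:-8600}" "$(consul_dns_name "$2")" SRV
}

# Example against the demo host:
#   lookup_service 10.200.2.1 hello-world
```

The nice part of this approach is that any application that can resolve a hostname can take part in service discovery without speaking the Consul HTTP API.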

Our next objective should be orchestrating our application by installing some sort of “manager” that tells our services to grow or shrink based on load. But we’ll leave that for another time.